|

“Thinking of atmospheres also returns us to the breath, to the continuous and necessary exchange between subject and environment, a movement that forms a multiplicity existing within the space necessary for sound to sound, and for Being, in whatever form, to resonate” (Dyson, 2009:17).

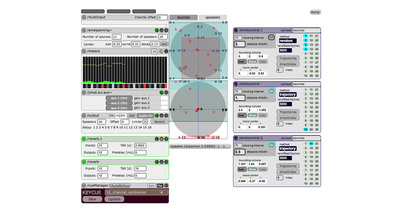

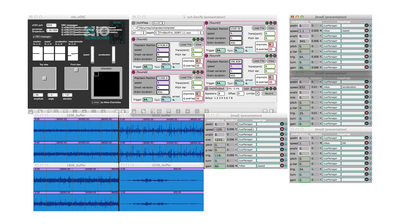

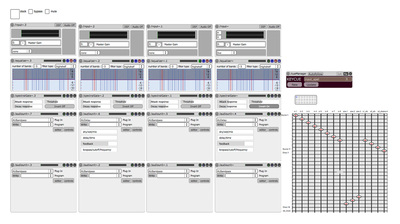

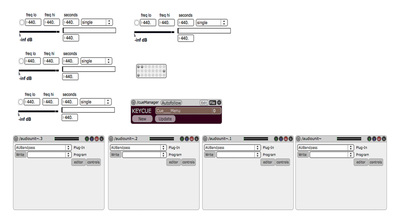

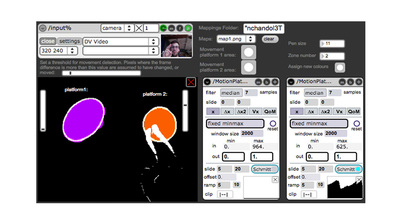

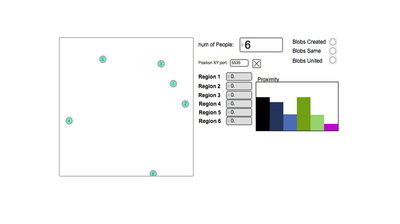

The most crucial component of Orbital Resonance was the creation of sound. The sound system consisted of eight speakers spread evenly around the space, four speakers overhead on the grid, and two subwoofers on each side of the space. Additionally, we placed two transducers below the floor in the active space and one transducer under each platform to create a fully immersive sonic environment. Originating from our bodies, the sound created a continuous feedback loop, ever expanding in the environment.

During our residency at Concordia's BlackBox, we experimented with different techniques, spatialisation systems, and sensors to explore what meaningful associations and sonic transformations could arise from our bodies in sound and motion. This process informed which areas of insight and creation we wanted to investigate.

In one experiment, we began by improvising with only our bodies and no technology in the space, motivated by words and phrases relating to our thematic concepts of breath, heartbeat, muscle, speed, and spatial relationships. There were no sensors and no augmentation of the space, just us. Eventually, after becoming more familiar with our own bodies and with each other in the space, we started to add layers through other apparatuses. We experimented with wearing the xOSC wireless board, which includes built-in gyroscope, accelerometer, and magnetometer sensors. We placed the board on different parts of our bodies (neck, waist, ankles, wrists, spine) while varying movement qualities such as acceleration, deceleration, and orientation. The sensor data was mapped to different sonic qualities and sources, drawn from recordings of our voices in various textures and words. Patches we designed in Max/MSP extracted the data from the built-in xOSC sensors. We played with different modules and techniques of the sensor and of our movement to create different types of mapping, avoiding the common one-to-one correlation. 
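
To illustrate what moving away from a one-to-one correlation can look like, here is a minimal sketch in Python. It is not the Max/MSP patch used in the project: the feature names (`acceleration`, `rotation_rate`, `tilt`), the sound parameter names, and all weights are hypothetical, and the motion features are assumed to be already normalised to the range 0 to 1.

```python
# Hypothetical sketch of a many-to-many mapping from motion features to
# sound parameters. Names and weights are illustrative assumptions only;
# they do not come from the project's Max/MSP patches.

def clamp01(x):
    """Keep a value inside the normalised range 0..1."""
    return max(0.0, min(1.0, x))

# Each sound parameter is driven by a weighted mix of several motion
# features, so no single sensor value controls a single sound quality.
WEIGHTS = {
    "grain_density": {"acceleration": 0.7, "rotation_rate": 0.3},
    "filter_cutoff": {"acceleration": 0.2, "tilt": 0.8},
    "playback_rate": {"rotation_rate": 0.5, "tilt": 0.5},
}

def map_features(features):
    """Map normalised motion features (0..1) to normalised sound parameters (0..1)."""
    params = {}
    for param, mix in WEIGHTS.items():
        total = sum(weight * features.get(name, 0.0) for name, weight in mix.items())
        params[param] = clamp01(total)
    return params
```

Calling `map_features({"acceleration": 1.0})` changes both `grain_density` and `filter_cutoff` at once, which is the kind of entangled relationship a one-to-one mapping would not produce.
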
The exploration informed our process of creating unique relationships between our bodies, sound, and sensors, all within the responsive environment.

In another experiment, we re-appropriated gesture tracking and sonification techniques developed by Navid Navab at the Topological Media Lab through a collection of Max/MSP objects. See Projects and Software for examples and more information. Using infrared tracking and motion detection technology, we mapped different sonic qualities and sources to our movements in the space. Finding this less interesting, we quickly shifted the idea toward mapped sonic zones within the active space, creating amplified and silenced micro-worlds. For example, we mapped the platforms as active spaces where sound would be heard. When our bodies activated a space, the sound shifted in texture and clarity depending on the position and quality of our movement. In turn, a feedback loop emerged as the sound began to inform our movement qualities and position as well.

In a second iteration of this experiment, we started to explore another issue important to our research. Performance and Interactive Art rely heavily on the sense of sight, but what would happen if we eliminated the visual feedback of our bodies and light in the space? How do we retain, process, and disseminate information from our other senses? In this exercise, we blindfolded each other to exist in darkness with only the audio feedback generated from the mapped zones of sound. We explored the space relying on our other senses, communicating via touch, our own breath, and the noise of the active spaces. We created different relationships, correlations in space, and interactions between each other and the different sonic outputs. The potential for learning seemed endless, and this is another avenue we are continuing to explore.

In addition, another experiment in the works aims to discover different methods of sonifying correlated movement. 
Through our tracking system, we can extract distance information for between one and six people, and we can map different sounds to delineate the changing distances between them and across the space as a whole. |
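
The distance extraction described above can be sketched as follows. This is a hedged illustration rather than the tracking system itself: it assumes each tracked person is reported as an `(x, y)` floor position in metres, and the function names, the linear gain curve, and the `max_distance` default are all hypothetical.

```python
import math
from itertools import combinations

def pairwise_distances(positions):
    """Return {(i, j): distance} for every pair of tracked (x, y) positions.

    Assumes positions are floor coordinates in metres (an assumption, not
    something the tracking system is known to output in this form).
    """
    return {
        (i, j): math.dist(positions[i], positions[j])
        for i, j in combinations(range(len(positions)), 2)
    }

def distance_to_gain(distance, max_distance=10.0):
    """Hypothetical mapping: gain falls linearly from 1 at 0 m to 0 at max_distance."""
    return max(0.0, 1.0 - distance / max_distance)
```

With a single tracked person the function returns an empty dictionary, since there is no pair to measure; six people yield fifteen pairs, each of which could drive its own sound parameter.
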